The Forge: Knowledge That Compounds

I got tired of re-teaching AI agents the same lessons every session. So I built a system where every correction, pattern, and hard-won fix compounds instead of evaporating.

I need to tell you about the most frustrating thing in AI-assisted development. It's not hallucinations. It's not context window limits. It's not even the cost. It's the forgetting.

You spend an hour teaching an AI agent that your project uses a specific auth pattern. It gets it. It writes beautiful code that follows the pattern perfectly. Next session, it's gone. You correct a CSS approach — use container queries, not media queries for this component library. Noted, the agent says. Next day, media queries everywhere. You explain your database naming conventions, your error handling philosophy, the specific way your API versioning works. The agent absorbs it all, performs flawlessly, and then at the end of the session, every lesson evaporates like it never happened.

I've been building software for twenty-five years. I've built design systems used by hundreds of developers. I've built marketing platforms that processed millions of leads. I've shipped more full-stack applications than I can count. And across all of that experience, I've never encountered a tool this powerful that was also this amnesiac.

So I built something to fix it. I call it The Forge.

The Problem Is Worse Than It Looks

The forgetting problem isn't just annoying — it's an architectural flaw that undermines the entire value proposition of AI agents. Let me explain what I mean.

When you work with a human developer, knowledge compounds. Day one, you explain the project structure. Day two, you don't have to explain it again. By week four, they've built a mental model of the entire system. They know the patterns, the exceptions, the "we do it this way because of that one incident in 2019" lore. That accumulated context is what makes an experienced team member valuable.

AI agents can't do this. Every session starts from zero. The context window is a blank slate. Sure, you can write documentation and system prompts, but that's static knowledge — it doesn't grow, adapt, or learn from corrections. The agent reads it, follows it mostly, and still makes the same category of mistakes because documentation can't capture the nuance of real project work.

I calculated how much time I was spending re-teaching agents. Across a typical week of heavy AI-assisted development, I was losing three to four hours to re-explanation and re-correction. Not fixing bugs — re-explaining things the agent had already learned and forgotten. That's nearly half a day per week of pure waste.

For someone who spent two decades optimising workflows and eliminating inefficiency from production systems, this was unacceptable. The engineer in me saw a systems problem. The designer in me saw a broken experience. The pragmatist in me saw a solution.

What The Forge Actually Is

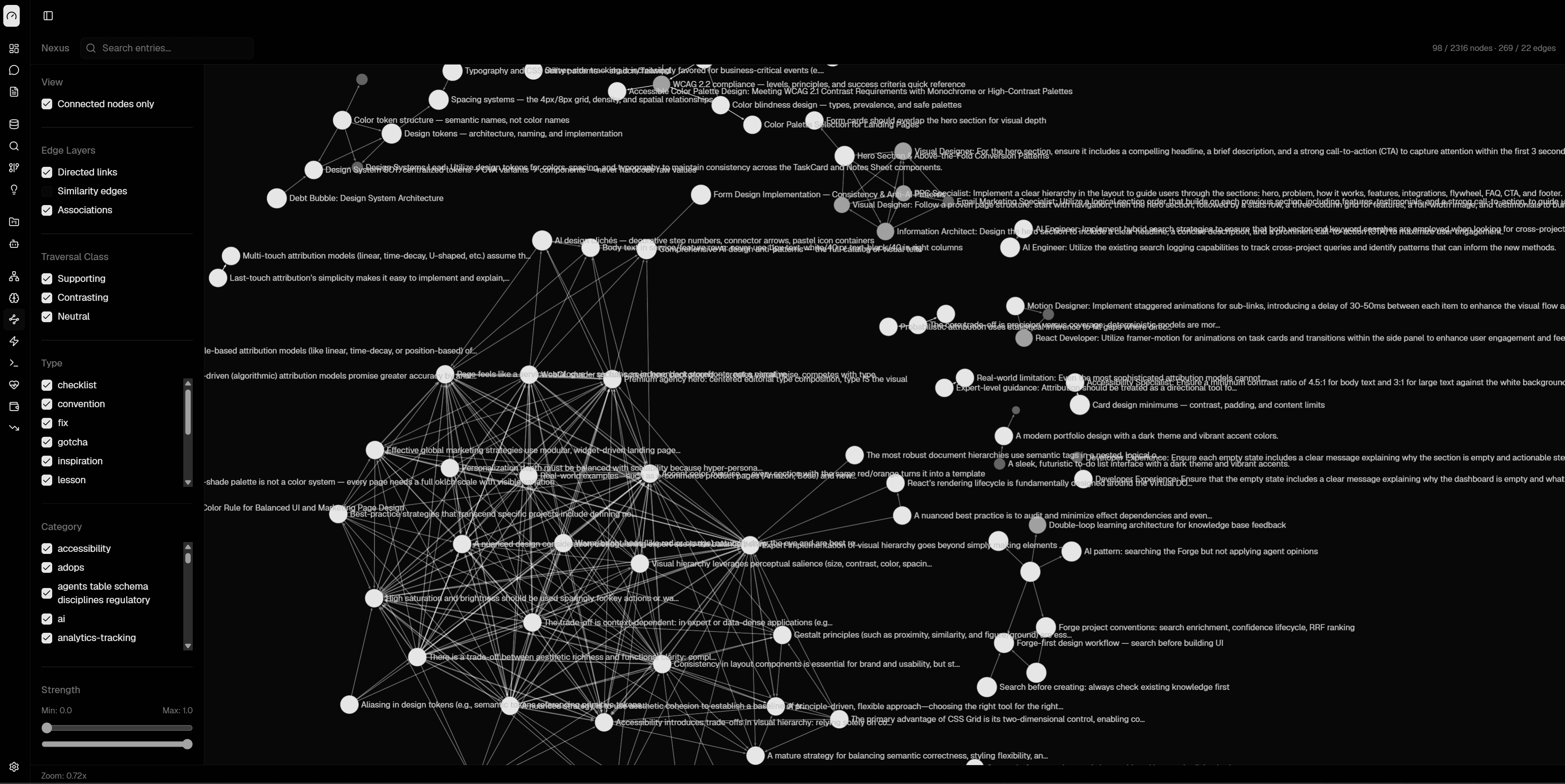

The Forge is a persistent knowledge graph that sits between you and your AI agents. It captures corrections, patterns, decisions, and workflows — and makes them available to every future session automatically. Knowledge goes in once and compounds forever.

At its core, The Forge has three operations that matter:

forge_init — You call this at the start of every session with your project key. It loads the full knowledge context for that project: established patterns, known pitfalls, architectural decisions, correction history. The agent starts every session with the accumulated wisdom of every previous session, not from zero.

forge_search: Before the agent writes code or makes a decision, it searches the knowledge graph for relevant context. Working on authentication? The Forge surfaces your auth patterns, past mistakes in auth code, and related architectural decisions. It's not a documentation lookup; it's contextual retrieval that connects related concepts across your entire project history.

forge_learn — This is the one that changes everything. When you correct the agent — "no, use the service pattern, not direct database access" — that correction gets captured, categorised, and linked to relevant concepts in the knowledge graph. It's not just stored; it's connected. The next time any task touches database access, that correction surfaces automatically.

The learning loop is the key. Every correction you make teaches the system permanently. Not just for this session, not just for this project — corrections that represent general principles propagate across projects. Specific corrections stay scoped to where they apply. The graph handles the routing.
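To make the three operations concrete, here is a minimal in-memory sketch. It is an illustration under stated assumptions, not The Forge's actual implementation: the `Forge` and `Entry` classes, the tag-overlap scoring, and the convention that `project=None` marks a general principle are all hypothetical choices made for this example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Entry:
    kind: str                # e.g. "correction", "pattern", "decision"
    text: str
    tags: set
    project: Optional[str]   # assumption: None marks a general principle


@dataclass
class Forge:
    entries: list = field(default_factory=list)

    def forge_learn(self, text, tags, project=None, kind="correction"):
        """Store a lesson; leaving project as None shares it with every project."""
        entry = Entry(kind, text, set(tags), project)
        self.entries.append(entry)
        return entry

    def forge_init(self, project):
        """Load everything visible to a project: its own entries plus general ones."""
        return [e for e in self.entries if e.project in (project, None)]

    def forge_search(self, project, query_tags):
        """Return visible entries that share a tag with the query, best overlap first."""
        wanted = set(query_tags)
        scored = [(len(e.tags & wanted), e) for e in self.forge_init(project)]
        scored = [(score, e) for score, e in scored if score > 0]
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [e for _, e in scored]


forge = Forge()
# A project-specific correction stays scoped to the "shop" project.
forge.forge_learn(
    "Use the service pattern, not direct database access",
    {"database", "services"},
    project="shop",
)
# A general principle is visible from every project.
forge.forge_learn("Always validate webhook signatures", {"webhooks", "security"})

shop_hits = forge.forge_search("shop", {"database"})
blog_context = forge.forge_init("blog")
```

In this sketch the "routing" is nothing more than the scope check in `forge_init`: the `blog` project never sees the `shop` correction, but it does inherit the webhook principle.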

Corrections Are the Most Valuable Data

This is the insight that shaped the entire architecture. When I was designing The Forge, I had to decide what to store. Documentation? Code patterns? Architectural decisions? All useful. But the most valuable data, by a wide margin, is corrections.

Think about it. When everything goes right, there's nothing to learn — the agent already knew the correct approach. It's when things go wrong that knowledge is created. The correction — the delta between what the agent did and what it should have done — is pure signal. It's specific, contextual, and directly actionable.

I drew on my experience building marketing analytics platforms here. In lead generation, you learn more from the leads that don't convert than the ones that do. The failure cases tell you where your model is wrong. Same principle applies to AI agents. Their mistakes are your training data.

The Forge treats corrections as first-class entities in the knowledge graph. Each correction links to the task context where it occurred, the pattern it relates to, the code it affected, and any related corrections. Over time, clusters of corrections reveal systematic blind spots — not just one-off mistakes, but categories of misunderstanding that the agent needs persistent guidance on.
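One way to picture a correction as a first-class entity, and how clusters of them can expose a blind spot, is sketched below. The `Correction` fields and the `blind_spots` helper are hypothetical names for this example, and a simple count per pattern stands in for whatever clustering the real graph performs.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Correction:
    text: str
    pattern: str        # the pattern this correction relates to
    task_context: str   # the task during which it occurred
    file_path: str      # the code it affected


def blind_spots(corrections, threshold=3):
    """Return (pattern, count) pairs for patterns corrected at least `threshold` times."""
    counts = Counter(c.pattern for c in corrections)
    return [(pattern, n) for pattern, n in counts.most_common() if n >= threshold]


history = [
    Correction("Don't mock the database", "integration-tests", "orders API", "tests/test_orders.py"),
    Correction("Run migrations before the suite", "integration-tests", "billing", "tests/conftest.py"),
    Correction("Use a real test database", "integration-tests", "users API", "tests/test_users.py"),
    Correction("Tables are plural snake_case", "db-naming", "schema change", "migrations/0007.sql"),
]

recurring = blind_spots(history)
```

Here `recurring` holds only the `integration-tests` pattern, because it is the only one corrected three times. That is the signal that the agent needs standing guidance there rather than another one-off fix.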

A correction like "don't mock the database in integration tests — we got burned when mocked tests passed but the prod migration failed" is worth more than any architecture diagram. It's specific. It's battle-tested. And it prevents a concrete failure mode that documentation alone would never capture with enough urgency.

The Agent Brain

Here's where it gets interesting. The Forge doesn't just store knowledge — it routes it.

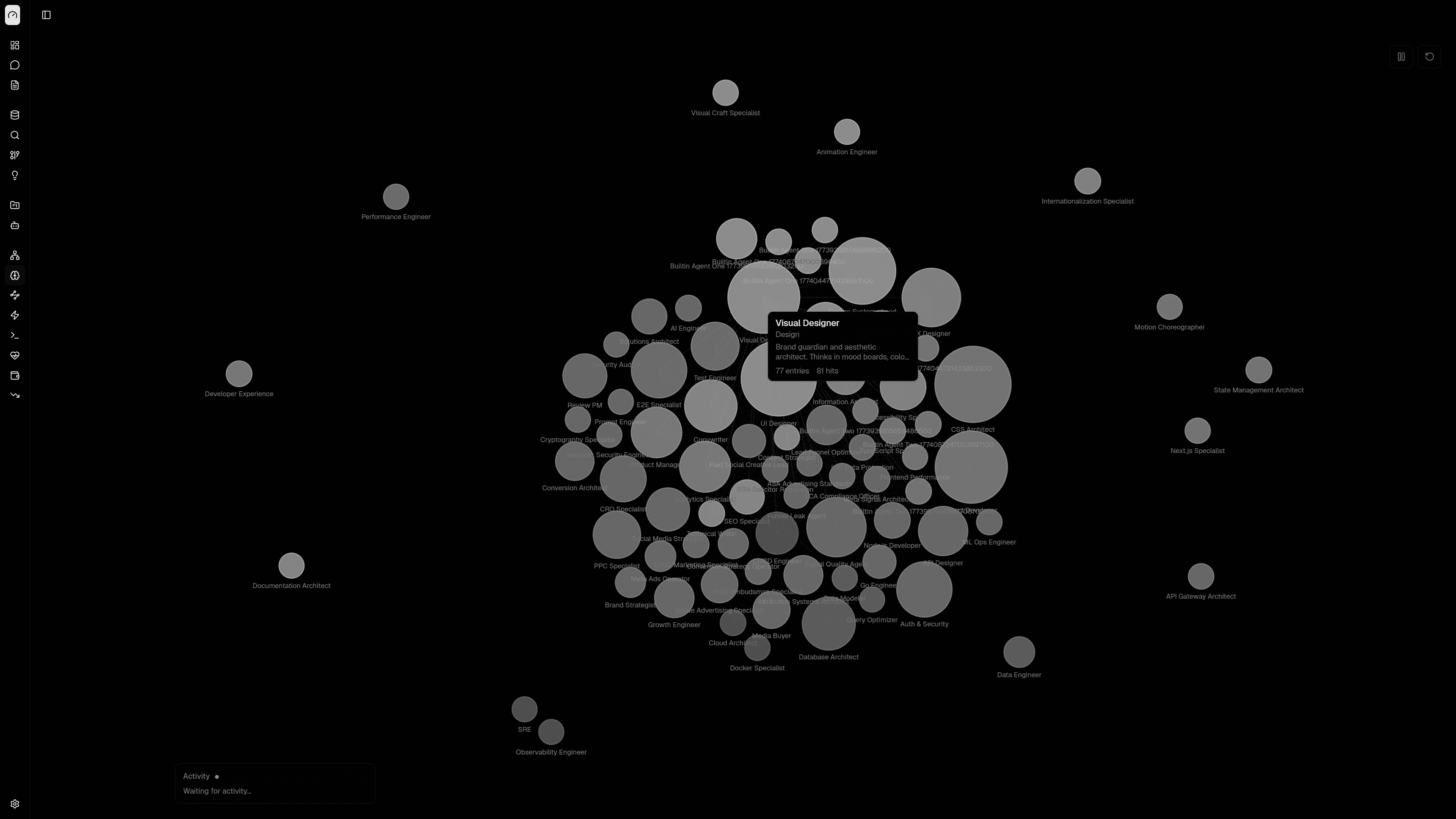

I built a system of specialised agents, each with domain expertise: visual design, performance optimisation, security review, accessibility audit, database architecture, API design, conversion optimisation. When a task comes in, The Forge analyses what's needed and routes to the right specialist — or assembles a team of specialists for complex work.

Each specialist has access to the full knowledge graph but with weighted relevance. The security agent sees security-related corrections and patterns first. The performance agent sees optimisation patterns and benchmarking results. The visual design agent sees design system tokens, component patterns, and past design decisions.

This isn't just prompt routing — it's knowledge routing. The right specialist gets the right context. A security review doesn't need to wade through CSS naming conventions. A visual design task doesn't need to see database indexing strategies. The Forge handles the relevance filtering so the active context is always high-signal.
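A rough sketch of weighted relevance follows. The specialist names come from the list above, but the weight table, the `context_for` function, and the numeric thresholds are illustrative assumptions, not values taken from The Forge.

```python
# Hypothetical per-specialist weights: how much each tag matters to each agent.
SPECIALIST_WEIGHTS = {
    "security": {"security": 1.0, "api": 0.6, "database": 0.4},
    "visual_design": {"design": 1.0, "css": 0.8, "accessibility": 0.5},
}


def context_for(specialist, entries, limit=3, min_score=0.3):
    """Pick the entries most relevant to one specialist.

    `entries` is a list of (text, tags) pairs. An entry's score is the highest
    weight among its tags; entries below `min_score` are filtered out.
    """
    weights = SPECIALIST_WEIGHTS[specialist]
    scored = []
    for text, tags in entries:
        score = max((weights.get(tag, 0.0) for tag in tags), default=0.0)
        if score >= min_score:
            scored.append((score, text))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:limit]]


entries = [
    ("Validate webhook signatures", {"security", "api"}),
    ("Use container queries, not media queries", {"css"}),
    ("Index foreign keys used in joins", {"database"}),
]

security_context = context_for("security", entries)
design_context = context_for("visual_design", entries)
```

Both specialists read from the same list of entries. The security agent ends up with the webhook rule first and the indexing note second, while the design agent only sees the container query correction.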

When you kick off something complex, say building a new landing page, The Forge doesn't throw a single generalist at the problem. The Visual Designer, the Copywriter, and the Conversion Architect all contribute knowledge from their domains. The result is deeper than any single agent could produce alone, because each specialist brings focused expertise rather than diluted generalism.

I've been designing information architectures for twenty-five years — from enterprise navigation systems to marketing dashboard hierarchies. The agent brain uses the same principles: clear taxonomy, contextual relevance, progressive disclosure. Show the right information to the right agent at the right time. It's IA for AI.

Skills: Encoding What Works

Beyond corrections and patterns, The Forge captures skills — encoded workflows that represent proven approaches to specific categories of work.

A skill isn't a template. It's a structured workflow with decision points, quality gates, and contextual adaptations. "Build a new API endpoint" is a skill that includes: check existing endpoint patterns, verify auth requirements, follow the naming convention for this project, include error handling that matches the established pattern, write tests that follow the testing strategy, and update the API documentation in the established format.

Each step in a skill can reference knowledge graph entities. The "check existing endpoint patterns" step pulls the actual patterns from the graph — patterns that have been refined by every correction ever made on API work in this project. The skill stays current because the knowledge it references stays current.
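The idea that a skill stays current because it references the graph, rather than copying from it, can be sketched like this. The `Step` and `Skill` classes, the `render` method, and the `pattern:...` keys are hypothetical, and a plain dictionary stands in for the knowledge graph.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    instruction: str
    refs: list = field(default_factory=list)  # keys of knowledge graph entities


@dataclass
class Skill:
    name: str
    steps: list

    def render(self, graph):
        """Expand each step with the current text of the entities it references."""
        lines = []
        for number, step in enumerate(self.steps, start=1):
            lines.append(f"{number}. {step.instruction}")
            for key in step.refs:
                lines.append(f"   - {key}: {graph.get(key, '(no entry yet)')}")
        return "\n".join(lines)


# A dict standing in for the knowledge graph in this sketch.
graph = {"pattern:endpoint-naming": "Plural nouns, kebab-case paths"}

new_endpoint = Skill(
    name="Build a new API endpoint",
    steps=[
        Step("Check existing endpoint patterns", refs=["pattern:endpoint-naming"]),
        Step("Verify auth requirements", refs=["pattern:auth"]),
        Step("Write tests that follow the testing strategy"),
    ],
)

before = new_endpoint.render(graph)

# A later correction refines the pattern; the skill definition is untouched.
graph["pattern:endpoint-naming"] = "Plural nouns, kebab-case paths, version prefix /v2"
after = new_endpoint.render(graph)
```

Because references are resolved at render time, `after` picks up the refined naming pattern without anyone editing the skill itself.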

I built this because I was tired of writing the same instructions in every prompt. "Remember to use our error handling pattern. Remember the naming convention. Remember to add tests." Skills encode all of that into a reusable workflow that the agent follows automatically. I define it once, the corrections refine it over time, and every future session benefits.

The parallel to my design systems work is direct. A design system doesn't just document components — it encodes decisions. Spacing scales, colour semantics, interaction patterns. Skills do the same thing for AI workflows. They encode decisions so you don't have to re-make them every session.

The Compound Effect

I've been using The Forge for weeks now, and the compound effect is tangible. It's not a subtle improvement — it's a qualitative shift in how AI-assisted development feels.

Week one was mostly seeding. Lots of corrections, lots of forge_learn calls. The agent was still making mistakes, but each mistake only happened once. By the end of the first week, the knowledge graph had about two hundred entities — patterns, corrections, decisions, relationships.

Week two, I started noticing something. The agent was anticipating corrections before I made them. Not because it was smarter — because forge_search was surfacing relevant corrections before the agent wrote code. "Based on previous corrections in this project, I should use the service pattern here rather than direct database access." It was reading its own correction history and applying it proactively.

By week three, the agent felt like a team member who'd been on the project for months. It knew the patterns. It knew the exceptions. It knew the lore. Not because it remembered — it's still stateless — but because The Forge gave it access to the full accumulated context of every previous session.

The time savings are real and measurable. Those three to four hours per week I was losing to re-explanation? Gone. Not reduced — gone. The agent starts every session with full project context. Corrections from last Tuesday inform code written today. A pattern I established in the first week is consistently followed in the fourth week. Knowledge compounds instead of evaporating.

Why I Built This Instead of Waiting

People ask me why I built The Forge instead of waiting for AI providers to solve the memory problem. Fair question. OpenAI has memory features. Anthropic is working on persistent context. Why build my own system?

Three reasons.

First, control. My project knowledge — my architectural decisions, my patterns, my hard-won debugging insights — lives in my system, not in someone else's cloud feature. I can inspect it, edit it, export it, version it. It's a knowledge base I own, not a feature I rent.

Second, structure. Provider memory features are typically flat — a list of remembered facts. The Forge is a graph. Concepts link to related concepts. Corrections link to patterns link to decisions link to code contexts. That structure enables relevance-based retrieval that a flat memory can't match.
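To show what a graph adds over a flat list, here is a small sketch that walks links outward from a single correction. The node names, the adjacency dictionary, and the `related` helper are made up for this example; a breadth-first walk with a hop limit is one simple way to do it, not necessarily how The Forge retrieves context.

```python
from collections import deque


def related(links, start, max_hops=2):
    """Breadth-first walk: map each node within `max_hops` of `start` to its distance."""
    distance = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distance[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbour in links.get(node, ()):
            if neighbour not in distance:
                distance[neighbour] = distance[node] + 1
                queue.append(neighbour)
    del distance[start]
    return distance


# Correction -> pattern -> decision -> code context, as directed links.
links = {
    "correction:no-direct-db-access": ["pattern:service-layer"],
    "pattern:service-layer": ["decision:services-own-queries"],
    "decision:services-own-queries": ["code:services/orders.py"],
}

nearby = related(links, "correction:no-direct-db-access", max_hops=2)
```

A flat memory would return the correction and nothing else. Following the links also brings back the pattern it belongs to and the decision behind that pattern, while the hop limit keeps more distant nodes out of the context.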

Third, compounding across projects. General principles I learn on one project ("always validate webhook signatures," "use optimistic UI updates for perceived performance," "never trust client-side date formatting") propagate to other projects automatically. Provider memory is typically scoped the way the provider decides, often to a single conversation thread or account. The Forge is scoped to however I want to scope it.

What I've Learned Building It

Building The Forge taught me something about AI that I don't see discussed enough: the gap between AI capability and AI usefulness is a tooling problem, not a model problem.

GPT-4, Claude, Gemini — these models are extraordinarily capable. They can write complex code, reason about architecture, debug subtle issues. The capability is there. But that capability resets every session. It's like having a brilliant contractor who shows up every morning with complete amnesia. The talent is real. The continuity is zero.

The Forge is a continuity layer. It doesn't make the AI smarter. It makes the AI's intelligence persistent. And persistence, it turns out, is the difference between a tool you use occasionally and a tool that transforms how you work.

I've spent my career building systems that compound — design systems, pattern libraries, marketing engines, knowledge bases. Every one of those systems followed the same arc: painful setup, gradual accumulation, then a tipping point where the accumulated knowledge started paying dividends that far exceeded the ongoing investment. The Forge follows exactly the same curve. The first week is an investment. The second week breaks even. By the third week, you're getting returns you didn't expect.

That's not a product pitch. It's a conviction from twenty-five years of watching knowledge evaporate from teams, projects, and tools — and knowing it doesn't have to. Stop re-teaching. Start compounding. Every correction is an investment. Every session builds on the last.

The best AI workflows aren't the ones with the smartest models. They're the ones where knowledge compounds instead of evaporating.